Spark and its RDDs were developed in 2012 in response to limitations in the MapReduce cluster computing paradigm, which forces a particular linear dataflow structure on distributed programs: MapReduce programs read input data from disk, map a function across the data, reduce the results of the map, and store reduction results on disk. Spark's RDDs function as a working set for distributed programs that offers a (deliberately) restricted form of distributed shared memory. Inside Apache Spark the workflow is managed as a directed acyclic graph (DAG): nodes represent RDDs, while edges represent the operations on the RDDs. The RDD technology still underlies the Dataset API.
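To make the graph idea concrete, here is a minimal sketch in plain Python. It is not the Spark API: `ToyDataset` and its methods are hypothetical names invented for this illustration, and the example only models a linear chain rather than a general DAG. Each object is a node that remembers its parent and the operation (edge) that produced it, and nothing is computed until `collect()` replays the chain.

```python
class ToyDataset:
    """Toy stand-in for an RDD: one node in a lineage graph (NOT the Spark API)."""

    def __init__(self, source=None, parent=None, op=None, op_name="source"):
        self.source = source    # concrete data, only set on the root node
        self.parent = parent    # upstream node this one was derived from
        self.op = op            # the edge: function applied to the parent's rows
        self.op_name = op_name  # label used when printing the lineage

    def map(self, fn):
        # Record the operation; do not run it yet.
        return ToyDataset(parent=self,
                          op=lambda rows: [fn(r) for r in rows],
                          op_name="map")

    def filter(self, pred):
        # Record the operation; do not run it yet.
        return ToyDataset(parent=self,
                          op=lambda rows: [r for r in rows if pred(r)],
                          op_name="filter")

    def collect(self):
        # Walk back to the root and replay every recorded operation.
        if self.parent is None:
            return list(self.source)
        return self.op(self.parent.collect())

    def lineage(self):
        # List the operation names from the root down to this node.
        names = []
        node = self
        while node is not None:
            names.append(node.op_name)
            node = node.parent
        return list(reversed(names))


numbers = ToyDataset(source=range(1, 6))
evens_squared = numbers.map(lambda x: x * x).filter(lambda x: x % 2 == 0)

print(evens_squared.lineage())  # ['source', 'map', 'filter']
print(evens_squared.collect())  # [4, 16]
```

Because every node keeps the recipe that produced it, a lost result can be rebuilt by replaying the chain from the source. That is the intuition behind maintaining the data in a fault-tolerant way, although real Spark does this per partition across a cluster.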

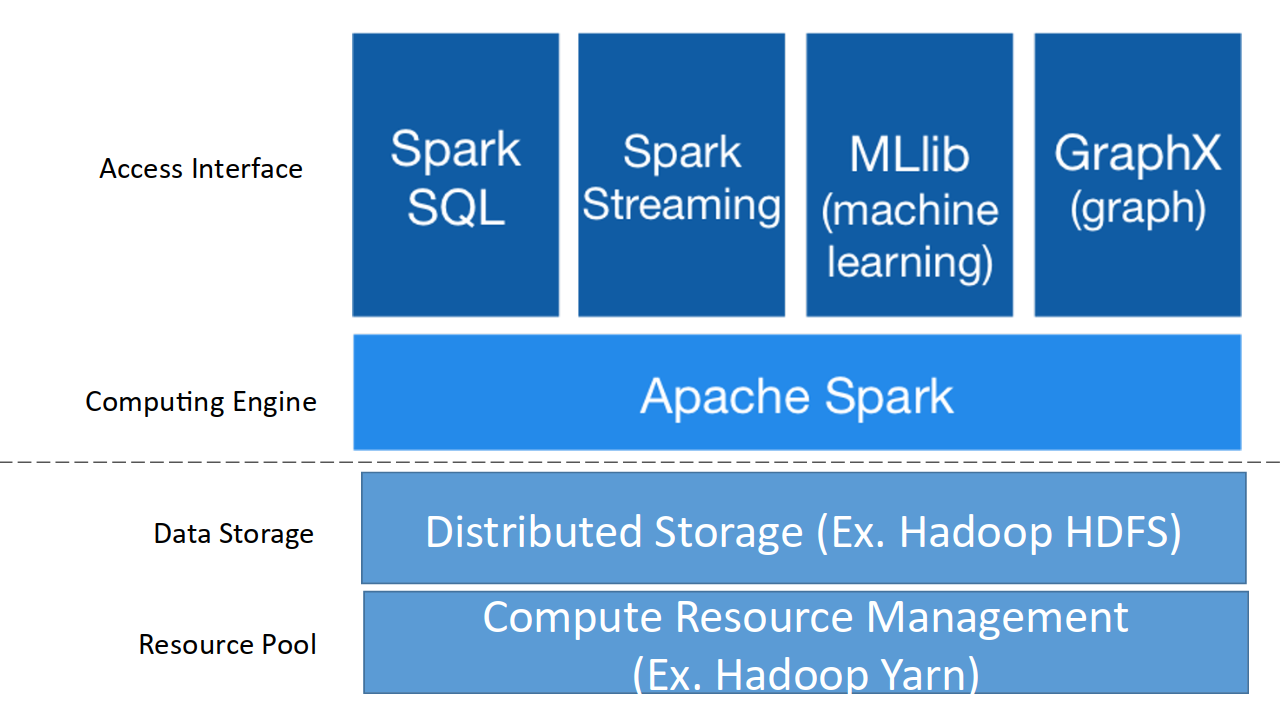

Apache Spark is an open-source unified analytics engine for large-scale data processing. Spark provides an interface for programming clusters with implicit data parallelism and fault tolerance. Originally developed at the University of California, Berkeley's AMPLab, the Spark codebase was later donated to the Apache Software Foundation, which has maintained it since.

Apache Spark has its architectural foundation in the resilient distributed dataset (RDD), a read-only multiset of data items distributed over a cluster of machines and maintained in a fault-tolerant way. The DataFrame API was released as an abstraction on top of the RDD, followed by the Dataset API. In Spark 1.x, the RDD was the primary application programming interface (API), but as of Spark 2.x use of the Dataset API is encouraged even though the RDD API is not deprecated.

Spark contains different components: Spark Core, Spark SQL, Spark Streaming, MLlib, and GraphX. These libraries solve diverse tasks, from data manipulation to performing complex operations on data. In addition, Spark can run over a variety of cluster managers, including Hadoop YARN, Apache Mesos, and a simple cluster manager included in Spark itself called the Standalone Scheduler. The tool is versatile and worth learning because of its variety of uses. It’s easy to get started running Spark locally without a cluster, and then upgrade to a distributed deployment as needs increase. The Spark official site and Spark GitHub contain many resources related to Spark. For further reading, see O’Reilly’s ‘Learning Spark: Lightning-Fast Big Data Analysis’ by Holden Karau, Andy Konwinski, Patrick Wendell, and Matei Zaharia.

Spark is used for a diverse range of applications. All code and data used in this post can be found in my Hadoop examples GitHub repository. Before starting work with the code we have to copy the input data, such as transactions.txt, to HDFS. At the end, we checked that the result obtained through Spark was equal to the expected result.

Spark is an open source project that has been built and is maintained by a thriving and diverse community of developers. Spark started in 2009 as a research project in the UC Berkeley RAD Lab, later to become the AMPLab. It was observed that MapReduce was inefficient for some iterative and interactive computing jobs, and Spark was designed in response. Iterative algorithms have always been hard for MapReduce, requiring multiple passes over the same data. Spark’s aim is to be fast for interactive queries and iterative algorithms, bringing support for in-memory storage and efficient fault recovery.

The tables that will be used for demonstration are called users and transactions. Given these datasets, I want to find the number of unique locations in which each product has been sold. To do that, I need to join the two datasets together. Previously I have implemented this solution in Java, with Hive, and with Pig. The Java solution was about 500 lines of code, while the Hive and Pig versions were about 20 lines at most.

For this task we have used Spark on a Hadoop YARN cluster. Our code will read and write data from/to HDFS.
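The task described above (join users and transactions, then count the unique locations per product) can be sketched in plain Python to show the logic before it is expressed in Spark. This is only an illustration under stated assumptions: the post does not show the table schemas, so the column layouts and sample rows below are hypothetical, and an inner join is assumed. On a cluster the same steps would be written with Spark's own join and distinct operations instead of dictionaries and sets.

```python
from collections import defaultdict

# Hypothetical sample rows; the real schemas are not shown in the post.
# users: (user_id, location)
users = [
    (1, "US"),
    (2, "GB"),
    (3, "FR"),
]

# transactions: (transaction_id, product_id, user_id)
transactions = [
    (1, "product-1", 1),
    (2, "product-1", 2),
    (3, "product-1", 1),  # repeat buyer: must not add a second "US"
    (4, "product-2", 3),
    (5, "product-3", 9),  # user 9 is unknown, so this row is dropped
]


def unique_locations_per_product(users, transactions):
    """Join transactions to users on user_id, then count distinct locations."""
    location_by_user = dict(users)  # lookup table: user_id -> location
    locations = defaultdict(set)    # product_id -> set of locations
    for _txn_id, product_id, user_id in transactions:
        location = location_by_user.get(user_id)
        if location is not None:    # inner join: skip unmatched transactions
            locations[product_id].add(location)
    return {product: len(locs) for product, locs in locations.items()}


result = unique_locations_per_product(users, transactions)
print(result)  # {'product-1': 2, 'product-2': 1}
```

Comparing `result` against a hand-computed answer for a small input like this one is the same kind of check the post describes for the Spark output.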
